Understanding Camera Optics & Smartphone Camera Trends, A Presentation by Brian Klug

by Brian Klug on February 22, 2013 5:04 PM EST

Posted in: Smartphones, camera, Android, Mobile

Recently I was asked to give a presentation about smartphone imaging and optics at a small industry event, and given my background I was more than willing to comply. I didn't craft this presentation for any particular product or announcement, but I thought it worth sharing beyond the event itself, especially in the recent context of the HTC One. The idea was to provide a high-level primer on both camera optics and general smartphone imaging trends, and to catalyze some discussion.

For readers here, I think this is a great primer on the state of smartphone cameras if you’re not paying super close attention, and also on the imaging chain of a mobile device at a high level.

Some figures are from the incredibly useful (never leaves my side in book form or PDF form) Field Guide to Geometrical Optics by John Greivenkamp; a few others are my own or from OmniVision or Wikipedia. I've put the slides into a gallery and gone through them pretty much individually, but if you want the PDF version, you can find it here.

Smartphone Imaging

The first two slides are just background about myself and the site. I did my undergrad at the University of Arizona and obtained a bachelor's in Optical Sciences and Engineering, doing the Optoelectronics track. I worked at a few relevant places as an undergrad intern for a few years, and made some THz gradient index lenses at the end. I think it’s a reasonable expectation that everyone who is a reader is also already familiar with AnandTech.

Next up are some definitions of optical terms. I think any discussion about cameras is impossible to have without at least introducing the index of refraction, wavelength, and optical power. I’m sticking very high level here. Index of refraction refers of course to how much the speed of light is slowed down in a medium compared to vacuum, which is important for understanding refraction. Wavelength is of course easiest to explain by mentioning color, and optical power refers to how quickly a system converges or diverges an incoming ray of light. I’m also playing fast and loose when talking about magnification here, but again in the camera context it’s easier to explain this way.
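To make those three definitions a little more concrete, here is a minimal Python sketch. It is an illustration rather than anything from the slides: the function names are made up, the index of 1.5 is just a typical glass-like value, and the 4 mm focal length is a hypothetical figure. It uses the textbook relations v = c/n, Snell's law, and optical power as the reciprocal of focal length.

```python
import math

C_VACUUM_M_PER_S = 299_792_458.0  # speed of light in vacuum (exact by SI definition)


def speed_in_medium(n):
    """Speed of light in a medium with index of refraction n (v = c / n)."""
    return C_VACUUM_M_PER_S / n


def refraction_angle_deg(n1, n2, incidence_deg):
    """Snell's law: n1 * sin(theta1) = n2 * sin(theta2). Angles in degrees."""
    s = n1 * math.sin(math.radians(incidence_deg)) / n2
    if abs(s) > 1.0:
        # No refracted ray exists past the critical angle.
        raise ValueError("total internal reflection: no refracted ray")
    return math.degrees(math.asin(s))


def optical_power_diopters(focal_length_m):
    """Optical power is the reciprocal of focal length (in meters)."""
    return 1.0 / focal_length_m


if __name__ == "__main__":
    # Assumed values for illustration only.
    print(speed_in_medium(1.5))                  # light is slower in the medium
    print(refraction_angle_deg(1.0, 1.5, 30.0))  # ray bends toward the normal
    print(optical_power_diopters(0.004))         # hypothetical 4 mm focal length
```

A shorter focal length gives a larger optical power, which is the "how quickly a system converges a ray" idea in numeric form.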

Other good terms are F-number, the so-called F-word of optics. Most of the time in the context of cameras we’re talking about working F-number, and the simplest explanation here is that this refers to the light collection ability of an optical system. F-number is defined as the ratio of the focal length to the diameter of the entrance pupil. In addition, the normal progression for people who think about cameras is in steps of the square root of two (full stops), each of which changes the light collection by a factor of two. Finally we have optical format or image sensor format, which is generally given in the notation 1/x" in units of inches. This is the standard format for giving a sensor size, but it doesn’t have anything to do with the actual size of the image circle, and rather traces its roots back to the diameter of a vidicon glass tube. This should be thought of as being analogous to the size class of a TV or monitor: the actual dimensions change from manufacturer to manufacturer, but sensors of the same class are roughly the same size. Also, 1/2" would be a bigger sensor than 1/7".
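The F-number arithmetic is easy to check numerically. The short Python sketch below is only an illustration: the function names and the sample focal length and pupil diameter are assumptions, not values from the presentation. It computes F-number as focal length over entrance pupil diameter, generates the full-stop progression in steps of the square root of two, and compares light collection using the standard relation that collected light scales as one over the F-number squared.

```python
import math


def f_number(focal_length_mm, entrance_pupil_diameter_mm):
    """F-number N = focal length / entrance pupil diameter (same units)."""
    return focal_length_mm / entrance_pupil_diameter_mm


def full_stops(count, start=1.0):
    """Full-stop progression: each step multiplies N by sqrt(2)."""
    return [start * math.sqrt(2) ** i for i in range(count)]


def relative_light(n_from, n_to):
    """Light collected at n_to relative to n_from (proportional to 1 / N^2)."""
    return (n_from / n_to) ** 2


if __name__ == "__main__":
    # Hypothetical lens: 4 mm focal length, 2 mm entrance pupil.
    print(f_number(4.0, 2.0))
    # The familiar 1, 1.4, 2, 2.8, 4, ... sequence before rounding.
    print([round(n, 1) for n in full_stops(7)])
    # One full stop down collects half the light.
    print(relative_light(2.0, 2.0 * math.sqrt(2)))
```

Because light collection goes with the square of the ratio, one step of the square root of two in F-number is exactly the factor of two described above.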

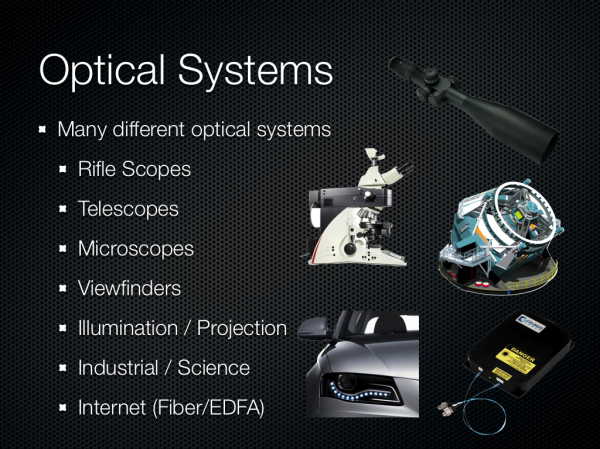

There are many different kinds of optical systems, and since I was originally asked just to talk about optics I wanted to underscore the broad variety of systems. Generally you can fit them into two different groups — those designed to be used with the eye, and those that aren’t. From there you get different categories based on application — projection, imaging, science, and so forth.

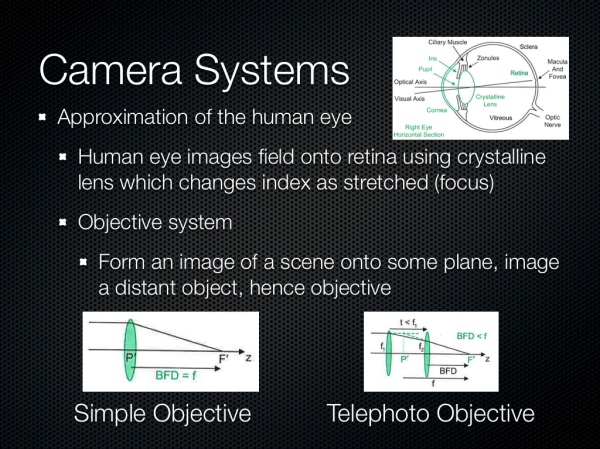

We’re talking about camera systems however, and thus objective systems. This is roughly an approximation of the human eye, but instead of the retina the image is formed on a sensor of some kind. Cameras usually implement similar features to the eye as well: a focusing system, an iris, and an imaging plane.

60 Comments

Sea Shadow - Friday, February 22, 2013

I am still trying to digest all of the information in this article, and I love it! It is because of articles like this that I check Anandtech multiple times per day. Thank you for continuing to provide such insightful and detailed articles. In a day and age where other "tech" sites are regurgitating the same press releases, it is nice to see anandtech continues to post detailed and informative pieces.

Thank you!

arsena1 - Friday, February 22, 2013

Yep, exactly this. Thanks Brian, AT rocks.

ratte - Friday, February 22, 2013

Yeah, got to echo the posts above, great article.

vol7ron - Wednesday, February 27, 2013

Optics are certainly an area the average consumer knows little about, myself included. For some reason it seems like consumers look at a camera's MP like how they used to view a processor's Hz; as if the higher number equates to a better quality, or more efficient device - that's why we can appreciate articles like these, which clarify and inform.

The more the average consumer understands, the more they can demand better products from manufacturers and make better educated decisions. In addition to being an interesting read!

tvdang7 - Friday, February 22, 2013

Same here they have THE BEST detail in every article.

Wolfpup - Wednesday, March 6, 2013

Yeah, I just love in depth stuff like this! May end up beyond my capabilities but none the less I love it, and love that Brian is so passionate about it. It's so great to hear on the podcast when he's ranting about terrible cameras! And I mean that, I'm not making fun, I think it's awesome.

Guspaz - Friday, February 22, 2013

Is there any feasibility (anything on the horizon) to directly measure the wavelength of light hitting a sensor element, rather than relying on filters? Or perhaps to use a layer on top of the sensor to split the light rather than filter the light? You would think that would give a substantial boost in light sensitivity, since a colour filter based system by necessity blocks most of the light that enters your optical system, much in the way that 3LCD projector produces a substantially brighter image than a single-chip DLP projector given the same lightbulb, because one splits the white light and the other filters the white light.

HibyPrime1 - Friday, February 22, 2013

I'm not an expert on the subject so take what I'm saying here with a grain of salt. As I understand it you would have to make sure that no more than one photon is hitting the pixel at any given time, and then you can measure the energy (basically energy = wavelength) of that photon. I would imagine if multiple photons are hitting the sensor at the same time, you wouldn't be able to distinguish how much energy came from each photon.

Since we're dealing with single photons, weird quantum stuff might come into play. Even if you could manage to get a single photon to hit each pixel, there may be an effect where the photons will hit multiple pixels at the same time, so measuring the energy at one pixel will give you a number that includes the energy from some of the other photons. (I'm inferring this idea from the double-slit experiment.)

I think the only way this would be possible is if only one photon hits the entire sensor at any given time, then you would be able to work out its colour. Of course, that wouldn't be very useful as a camera.

DominicG - Saturday, February 23, 2013

Hi Hlby, photodetection does not quite work like that. A photon hitting a photodiode junction either has enough energy to excite an electron across the junction or it does not. So one way you could make a multi-colour pixel would be to have several photodiode junctions one on top of the other, each with a different "energy gap", so that each one responds to a different wavelength. This idea is now being used in the highest efficiency solar cells to allow all the different wavelengths in sunlight to be absorbed efficiently. However for a colour-sensitive photodiode, there are some big complexities to be overcome - I have no idea if anyone has succeeded or even tried.

HibyPrime1 - Saturday, February 23, 2013

Interesting. I've read about band-gaps/energy gaps before, but never understood what they mean in any real-world sense. Thanks for that :)