Next-Generation CAMM, MR-DIMM Memory Modules Show Up at Computex

by Anton Shilov on June 2, 2023 1:00 PM EST

Dynamic random access memory is an indispensable part of all computers, and requirements for DRAM (such as performance, power, density, and physical implementation) change over time. In the coming years, we will see new types of memory modules for laptops and servers, as traditional SO-DIMMs and RDIMMs/LRDIMMs seem to be running out of steam in terms of performance, efficiency, and density.

At Computex 2023 in Taipei, Taiwan, ADATA demonstrated potential candidates to replace SO-DIMMs in client machines and RDIMMs/LRDIMMs in servers in the coming years, reports Tom's Hardware. These include Compression Attached Memory Modules (CAMMs), at least for ultra-thin notebooks, compact desktops, and other small form-factor applications; Multi-Ranked Buffered DIMMs (MR-DIMMs) for servers; and CXL memory expansion modules for machines that need extra system memory at a cost below that of commodity DRAM.

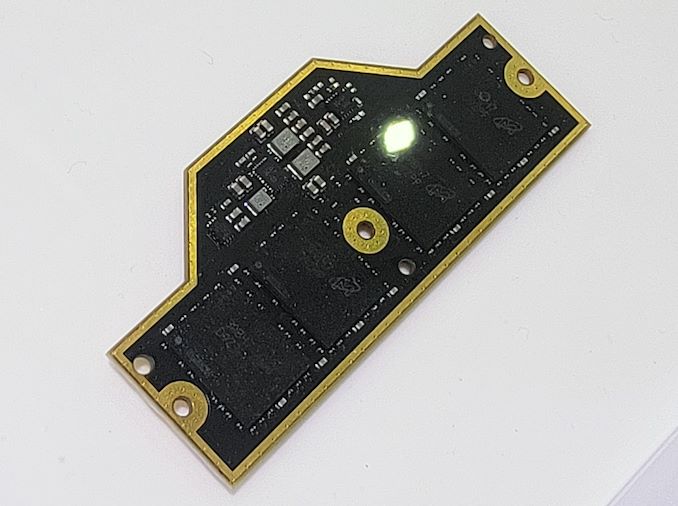

CAMM

The CAMM specification is slated to be finalized by JEDEC later in 2023. Still, ADATA demonstrated a sample of such a module at the trade show to highlight its readiness to adopt the upcoming technology.

Image Courtesy Tom's Hardware

The key benefits of CAMMs include shorter connections between memory chips and memory controllers (which simplifies topology and therefore enables higher transfer rates and lowers costs), the option to build modules from DDR5 or LPDDR5 chips (LPDDR has traditionally used point-to-point connectivity), dual-channel connectivity on a single module, higher DRAM density, and reduced thickness compared to dual-sided SO-DIMMs.

While the transition to an all-new type of memory module will require tremendous effort from the industry, the benefits promised by CAMMs will likely justify the change.

Last year, Dell was the first PC maker to adopt CAMM in its Precision 7670 notebook. Meanwhile, ADATA's CAMM module differs significantly from Dell's version, although this is not unexpected as Dell has been using pre-JEDEC-standardized modules.

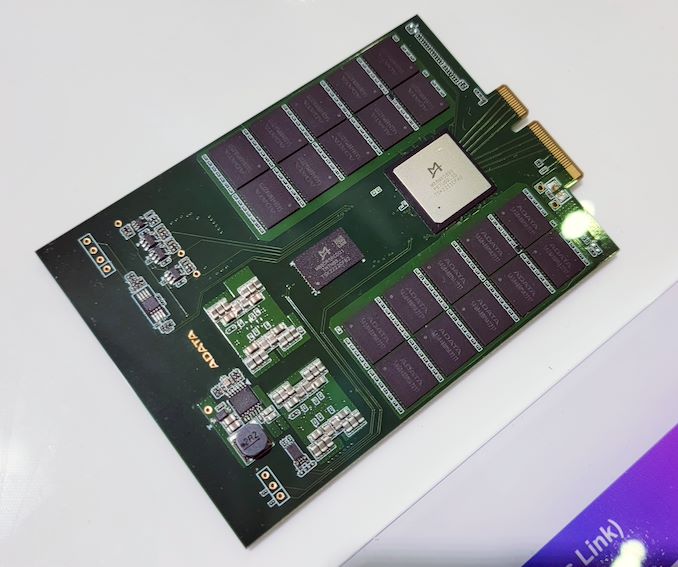

MR DIMM

Datacenter-grade CPUs are increasing their core counts rapidly and therefore need to support more memory with each generation. But it is hard to increase DRAM device density at a high pace due to cost, performance, and power consumption concerns. As a result, processors add memory channels along with cores, which leads to an abundant number of memory slots per CPU socket and more complex motherboards.

This is why the industry is developing two types of memory modules to replace RDIMMs/LRDIMMs used today.

On the one hand, there is the Multiplexer Combined Ranks DIMM (MCR DIMM) technology backed by Intel and SK Hynix. These are dual-rank buffered memory modules with a multiplexer buffer that fetches 128 bytes of data from both ranks working simultaneously and communicates with the memory controller at high speed (8000 MT/s for now). Such modules promise to increase performance significantly and to somewhat simplify building dual-rank modules.
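To make the multiplexing idea concrete, here is a toy sketch of a buffer that combines one burst from each of two ranks into a single 128-byte transfer. The even 64-byte split per rank, the function names, and the assumption that each rank runs at half the host-side rate are illustrative guesses rather than details from the MCR DIMM design.

```python
# Toy model of a rank-multiplexing buffer. Not a description of real hardware.
TOTAL_FETCH_BYTES = 128   # from the article: 128 bytes fetched from both ranks
NUM_RANKS = 2             # dual-rank module
# Assumption: both ranks contribute equally, i.e. 64 bytes each.
RANK_BURST_BYTES = TOTAL_FETCH_BYTES // NUM_RANKS


def read_rank(rank_id: int) -> bytes:
    """Return a dummy burst for one rank, filled with the rank's id."""
    return bytes([rank_id]) * RANK_BURST_BYTES


def mux_fetch() -> bytes:
    """Read both ranks and combine their bursts into one host-side transfer."""
    return b"".join(read_rank(rank) for rank in range(NUM_RANKS))


def per_rank_rate_mts(host_rate_mts: int) -> float:
    """Assumption: each rank only needs to supply 1/NUM_RANKS of the host rate."""
    return host_rate_mts / NUM_RANKS


if __name__ == "__main__":
    data = mux_fetch()
    print(len(data), "bytes per combined fetch")
    print(per_rank_rate_mts(8000), "MT/s per rank for an 8000 MT/s host link")
```

In this simplified picture, the buffer is what lets the host-side link run faster than either rank could on its own.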

On the other hand, there is the Multi-Ranked Buffered DIMM (MR DIMM) technology, which seems to be supported by AMD, Google, Microsoft, JEDEC, and Intel (at least based on information from ADATA). MR DIMM uses the same concept as MCR DIMM: a buffer that lets the memory controller access both ranks simultaneously and that exchanges data with the controller at an increased transfer rate. This specification promises to start at 8,800 MT/s with Gen1, then evolve to 12,800 MT/s with Gen2, and then skyrocket to 17,600 MT/s with Gen3.
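For a rough sense of what these transfer rates mean, the sketch below converts them to theoretical peak bandwidth per module. It assumes a 64-bit (8-byte) data path per module with ECC bits excluded, which is the usual DDR5 DIMM arrangement but is not stated in the MR DIMM figures above. Sustained real-world throughput would be lower.

```python
# Peak bandwidth estimate: transfers per second times bytes per transfer.
BUS_WIDTH_BYTES = 8  # assumption: 64 data bits per module, ECC excluded

# Transfer rates quoted in the article for each MR DIMM generation (MT/s).
MR_DIMM_RATES_MTS = {"Gen1": 8800, "Gen2": 12800, "Gen3": 17600}


def peak_gb_per_s(rate_mts: int, bus_bytes: int = BUS_WIDTH_BYTES) -> float:
    """Theoretical peak in GB/s (decimal gigabytes) for a given MT/s rate."""
    return rate_mts * 1_000_000 * bus_bytes / 1e9


if __name__ == "__main__":
    for gen, rate in MR_DIMM_RATES_MTS.items():
        print(f"{gen}: {rate} MT/s -> {peak_gb_per_s(rate):.1f} GB/s peak")
```

Under these assumptions, Gen1 works out to about 70.4 GB/s per module, Gen2 to 102.4 GB/s, and Gen3 to 140.8 GB/s.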

ADATA already has MR DIMM samples supporting an 8,400 MT/s data transfer rate that can carry 16GB, 32GB, 64GB, 128GB, and 192GB of DDR5 memory. These modules will be supported by Intel's Granite Rapids CPUs, according to ADATA.

CXL Memory

While both MR DIMMs and MCR DIMMs promise to increase module capacity, some servers need a lot of system memory at a relatively low cost. Today, such machines have to rely on Intel's Optane DC Persistent Memory modules, which are based on the now-obsolete 3D XPoint memory and reside in standard DIMM slots. In the future, however, they will use memory modules that comply with the Compute Express Link (CXL) specification and connect to host CPUs over a PCIe interface.

ADATA displayed a CXL 1.1-compliant memory expansion device at Computex with an E3.S form factor and a PCIe 5.0 x4 interface. The unit is designed to expand system memory for servers cost-effectively using 3D NAND yet with significantly reduced latencies compared to even cutting-edge SSDs.
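As a back-of-the-envelope check on what a PCIe 5.0 x4 link can carry, the sketch below computes its raw bandwidth per direction. It uses the PCIe 5.0 signaling rate of 32 GT/s per lane and 128b/130b line encoding. Packet and protocol overhead (including CXL's own) is ignored, so usable bandwidth would be somewhat lower.

```python
# Raw per-direction bandwidth of a PCIe 5.0 link, before protocol overhead.
GT_PER_S_PER_LANE = 32            # PCIe 5.0 signaling rate per lane
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b line encoding
BITS_PER_BYTE = 8


def pcie5_gb_per_s(lanes: int) -> float:
    """Raw link bandwidth in GB/s per direction for the given lane count."""
    return GT_PER_S_PER_LANE * ENCODING_EFFICIENCY * lanes / BITS_PER_BYTE


if __name__ == "__main__":
    print(f"PCIe 5.0 x4: {pcie5_gb_per_s(4):.2f} GB/s per direction")
```

That comes to roughly 15.75 GB/s in each direction for an x4 link, well below the peak bandwidth of a single fast DDR5 module, which fits the device's role as capacity expansion rather than a replacement for main memory.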

4 Comments

ballsystemlord - Friday, June 2, 2023

How does the CAMM module connect to the rest of the system? I do not see any pins.

Wereweeb - Friday, June 2, 2023

They're underneath the chipless part of the module. Essentially, it's like a land grid array. Storage Review has some more images of Dell's version of CAMM showing the pins: https://www.storagereview.com/review/dell-camm-dra...

nandnandnand - Saturday, June 3, 2023

Maybe CAMM will really take off after the switch to DDR6, with no DDR6 SO-DIMM option to compete with. Even if there are benefits right now.

nonnull - Sunday, June 11, 2023

What's the latency of MR DIMMs? Historically that's been the Achilles' Heel of alternative DRAM interfaces. Ditto, what's the minimum burst length? The moment you go beyond a single cache line you start paying a hefty penalty on random accesses.